This paper explains why simply blurring faces does not truly protect privacy. It shows that modern AI can still recover identity. The research presents new methods that change a person’s identity while keeping facial actions like expression and gaze, making face data safer to use.

Face de‑identification has moved from a peripheral compliance concern to a central infrastructural problem in data‑driven industries. Across autonomous driving, smart cities, retail analytics, public safety, and surveillance, image and video pipelines increasingly depend on facial information while simultaneously operating under expanding privacy obligations. The doctoral thesis “Non‑Reversible and Attribute Preserving Face De‑Identification” by Felix Rosberg situates this tension precisely at the intersection of computer vision capability and data protection constraints, offering a detailed technical account of how contemporary generative models both enable and endanger privacy claims when applied at scale.

The thesis advances a central proposition that is quietly reshaping the field: effective facial anonymization can no longer be evaluated solely by whether a human observer recognizes a person, nor even by whether a single face recognition system fails to match an identity. Instead, anonymization must be understood as a multi‑dimensional transformation problem that simultaneously preserves task‑critical facial attributes while resisting a widening class of algorithmic attacks. This framing has consequences not only for system designers, but also for regulators, auditors, and standardization bodies attempting to define what anonymized visual data actually is in practice.

From Destructive Obfuscation to Generative Substitution

Traditional face anonymization methods remain widespread in industrial deployments. Blurring, pixelation, and full masking are still the default approaches used by camera vendors, transport authorities, and media organizations. Their appeal is simplicity, explainability, and low computational cost. Their weakness is equally evident. These methods systematically destroy spatial and semantic information that modern vision systems rely on, including gaze direction, facial pose, expression dynamics, and even the categorical fact that a face is present.

Rosberg’s work situates this limitation within a broader historical shift. Deep learning-based perception systems used in advanced driver‑assistance systems, driver monitoring, pedestrian intent modelling, and human‑machine interaction are not merely consuming image data but learning from subtle facial cues. Empirical findings cited in the thesis, such as the influence of pedestrian eye contact on driver behaviour, illustrate how facial attributes materially affect downstream safety metrics. In this context, destructive anonymization alters the data distribution itself, leading models to learn on artifacts rather than on representative human behaviour.

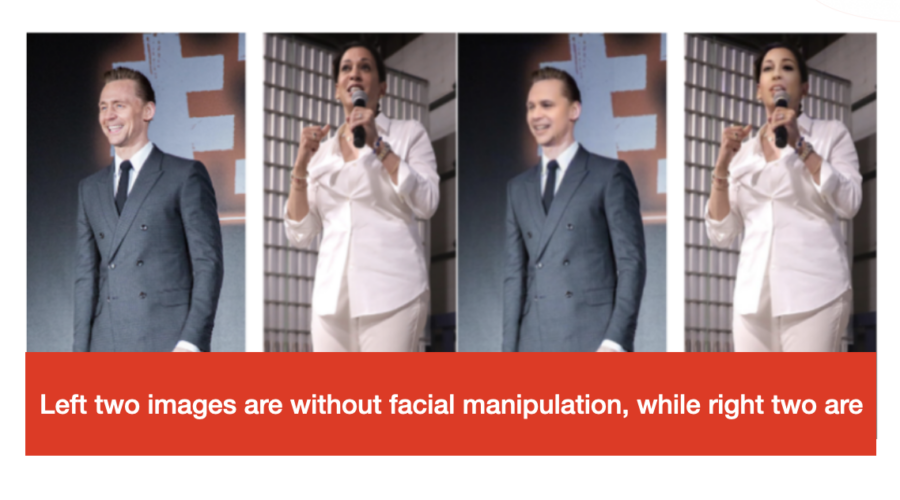

This observation motivates the turn toward replacement‑oriented de‑identification, where identity is changed rather than removed. In contemporary literature, this includes face swapping, conditional identity manipulation, GAN‑based inpainting, and diffusion‑driven synthesis. Among these, target‑oriented identity manipulation emerges as the dominant approach due to its ability to preserve pose, lighting, and expression directly from the input image while altering identity in a learned embedding space.

Target‑Oriented Models and the Identity Embedding Boundary

At the core of Rosberg’s technical analysis is the modern face recognition embedding. Systems such as ArcFace, CosFace, ElasticFace, and AdaFace dominate both academic benchmarks and commercial offerings, including those used by global vendors in access control and identity verification. These models project faces onto a normalized hypersphere where angular distance encodes identity similarity. This geometric design choice underpins their robustness and accuracy, but it also introduces an unexpected constraint for anonymization.

When de‑identification systems condition generative models directly on these embeddings, they inherit the structure of the identity space. Maximizing the distance between the original and generated identity can paradoxically make the anonymized output trivially linkable through vector negation or nearest‑neighbour search. Rosberg’s thesis documents this phenomenon empirically and shows that naive “maximal identity shift” strategies can worsen privacy rather than improve it.

This insight has implications beyond model design. It suggests that anonymization claims cannot be divorced from the specific biometric representations used during generation or evaluation. For regulators and standards bodies, this complicates the notion of technology‑neutral anonymization thresholds. It also raises questions for procurement and auditing: anonymization performance is inseparable from the choice of identity encoder, its geometric constraints, and the evaluation protocol applied.

Attribute Retention as a First‑Class Metric

A defining contribution of the thesis is the systematic treatment of attribute retention as a measurable, model‑agnostic objective rather than a qualitative side effect. Through the Interpreted Feature Similarity Regularization loss, Rosberg demonstrates that intermediate layers of face recognition models encode structure beyond identity, including pose, occlusion, and expression. By explicitly regularizing similarity in these layers while shifting the final identity representation, target‑oriented systems can preserve facial behavior with far greater stability.

This approach reframes how attribute preservation is validated. Instead of relying on pixel metrics or heuristic perceptual losses, the thesis aligns evaluation with the same representation spaces already trusted in biometric systems. Expression is assessed through learned embeddings and later through 3D morphable model coefficients. Pose is quantified using both deep estimators and parametric head models. Gaze is treated as a critical sub‑attribute, evaluated through eye landmark heatmaps and reinforced during training using an eye similarity discriminator.

For industry stakeholders, this signals a maturation of the field. Attribute preservation is no longer anecdotal or application‑specific. It can be benchmarked, audited, and optimized across datasets such as FaceForensics++, CelebA, and LFW. For standards organizations such as ISO IEC JTC 1 SC 42, IEEE, and NIST, the work illustrates a potential basis for defining utility preservation requirements alongside privacy metrics.

Reconstruction Attacks and the Question of Reversibility

Perhaps the most consequential finding in the thesis is the identification and demonstration of reconstruction attacks against target‑oriented de‑identification systems. By training adversarial models on paired original and anonymized images, Rosberg shows that identity can be recovered from outputs that appear fully anonymized to both humans and standard face recognition tests. These attacks exploit imperceptible residual cues left by the generative process, revealing that visual realism and biometric unlinkability are insufficient proxies for irreversibility.

This result shifts the threat model for de‑identification. Anonymization is no longer merely about preventing direct matching, but about preventing learned inversion. The parallels drawn with federated learning and gradient leakage attacks highlight a convergence between privacy vulnerabilities in distributed training and those in generative media pipelines.

For policy actors, this directly challenges categorical statements that generative replacement inherently produces anonymous data. For vendors, it introduces new liability considerations around secondary use and data sharing. For evaluators and certification bodies, it underscores the need to test anonymization systems against active adversaries rather than static benchmarks.

Hybrid Diffusion as a Defensive Strategy

The thesis culminates in the HYDRO architecture, which integrates a one‑step diffusion process into a target‑oriented de‑identification pipeline. The diffusion step injects controlled noise to destroy residual identity traces, while a learned denoising stage restores visual fidelity and attribute structure. This hybrid design achieves what prior methods could not: a measurable reduction in reconstruction attack success rates without sacrificing gaze, expression, or pose retention.

Technically, this represents a synthesis of two previously competing paradigms. Target‑oriented systems excel at attribute preservation but leak information. Diffusion models excel at distributional reshaping but are computationally expensive and often discard fine‑grained semantics. HYDRO demonstrates that selective diffusion, applied after identity manipulation rather than as a full generative process, can deliver non‑reversibility with bounded overhead.

From an industry perspective, the computational cost remains non‑trivial, particularly for real‑time video processing. Yet the direction is clear. As attacks improve and privacy expectations rise, defensive depth in anonymization pipelines may become unavoidable in regulated environments, particularly in transport, biometric analytics, and public‑sector data sharing.

Implications for Policy, Industry, and Standards

Rosberg’s thesis does not argue for regulatory change, but it implicitly reframes how existing frameworks may be interpreted. Regulations such as GDPR, CCPA, and comparable regimes rely on a distinction between personal data and anonymized data. This work shows that the boundary is technically porous and contingent on adversarial assumptions, model choice, and evaluation rigor.

For global standardization efforts, the thesis provides a concrete technical substrate from which test protocols, threat models, and reporting requirements could evolve. For industry leaders, it offers a cautionary account of overconfident anonymization claims and a roadmap for more robust implementations. For policymakers, it underscores that face de‑identification is no longer a solved problem delegated to simple preprocessing, but an active area of security‑sensitive system design.

In this sense, the thesis documents not just a set of algorithms, but a discipline in transition. Face de‑identification is becoming an engineering problem with explicit trade‑offs, attack surfaces, and performance boundaries. As visual data continues to scale across sectors, the questions it raises will increasingly shape how privacy is operationalized in practice.

References

Rosberg, F. (2025). Non‑reversible and attribute preserving face de‑identification (Doctoral dissertation, Halmstad University).

Deng, J., Guo, J., Xue, N., & Zafeiriou, S. (2019). ArcFace: Additive angular margin loss for deep face recognition. Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition.

Wang, H., Wang, Y., Zhou, Z., Ji, X., Gong, D., Zhou, J., Li, Z., & Liu, W. (2018). CosFace: Large margin cosine loss for deep face recognition. Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition.

Ho, J., Jain, A., & Abbeel, P. (2020). Denoising diffusion probabilistic models. Advances in Neural Information Processing Systems.

Rossler, A., Cozzolino, D., Verdoliva, L., Riess, C., Thies, J., & Niessner, M. (2019). FaceForensics++: Learning to detect manipulated facial images. Proceedings of the IEEE International Conference on Computer Vision.

Hukkelås, H., Mester, R., & Lindseth, F. (2019). DeepPrivacy: A generative adversarial network for face anonymization. Springer Advances in Visual Computing.

Guo, J., Deng, J., & Zafeiriou, S. (2021). ElasticFace: Elastic margin loss for deep face recognition. Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition.